HiEmbed_base_v1: Hancom InSpace Embedding Model (Base Type)

Developer: Hancom InSpace

Supported languages: Korean, English

Model Release Date: September 7th, 2025

Information

Introducing HiEmbed_base, a lightweight embedding model for vector search from Hancom InSpace.

This model performs strongly on both Korean and English text retrieval (see Benchmark below), which makes it well suited to mixed Korean and English environments. For RAG (Retrieval-Augmented Generation) deployments in a business setting, it offers a competitive balance of language coverage, model size, inference speed, and accuracy.

With this release, we aim to help grow the domestic (Korean) LLM and RAG ecosystem. We encourage everyone to test the model freely and apply it to their own services.

This model was trained on GPU infrastructure provided by Gyeongnam Technopark.

Training

Dataset: In-house built dataset (3 million entries)

HiEmbed_base is designed to maximize performance in Korean and English at the same time. We built the training dataset through iterative experiments; its main features are as follows.

Data Types

- KO: Q&A pairs and documents in the fields of administration, law, news, finance, and science & technology.

- EN: Q&A data on various topics, including web searches and community Q&A.

Dataset Composition and Scale

- Structure: Each entry is a triplet of query, positive, and negative samples:

```json
{
  "query": "question or anchor sentence",
  "pos": ["positive sample sentence 1"],
  "neg": ["negative sample sentence 1"]
}
```

- Core processing: Hard negative sampling was applied to sharpen the model's ability to separate relevant passages from similar but irrelevant ones.

- Final Scale: Training was conducted with a total of 3 million data entries constructed through the above process.

- Data split:
  - Training/validation ratio: 92% / 8%
  - Training data language ratio (KO:EN): 7:3 (to strengthen Korean performance)
  - Validation data language ratio (KO:EN): 1:1 (for a balanced evaluation of both languages)
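The card does not describe how the hard negatives were mined. The sketch below shows one common approach, which is an assumption and not necessarily the authors' pipeline. It embeds a candidate pool with an existing retriever, then keeps the highest-scoring candidates that are not labeled positive. Each result is emitted in the `{"query", "pos", "neg"}` format shown above. `build_triplet` is a hypothetical helper. In practice, a rank window or score margin is often added so that unlabeled true positives are not used as negatives.

```python
import numpy as np


def build_triplet(query, query_vec, positives, cand_texts, cand_vecs, k=1):
    """Build one {"query", "pos", "neg"} record with the k hardest negatives.

    Assumes query_vec and the rows of cand_vecs are L2-normalized, so the
    dot product equals cosine similarity. Candidates that are positives are skipped.
    """
    scores = cand_vecs @ query_vec
    pos_set = set(positives)
    negs = []
    for i in np.argsort(-scores):  # most similar candidates first
        if cand_texts[i] in pos_set:
            continue
        negs.append(cand_texts[i])
        if len(negs) == k:
            break
    return {"query": query, "pos": list(positives), "neg": negs}
```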

We focused on dataset quality and on the balance of the training data, aiming to improve English and multilingual performance as well as Korean. After testing several KO:EN ratios, we found that a 7:3 ratio in the training data gave the best results.
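Applied to the 3 million entries, the ratios above work out as follows. This calculation makes two assumptions. First, the 92% / 8% split is taken over the full 3 million entries. Second, each KO:EN ratio applies within its own split. `split_counts` is a hypothetical helper that uses integer arithmetic to avoid floating-point rounding.

```python
def split_counts(total, val_pct=8, train_ratio=(7, 3), val_ratio=(1, 1)):
    """Return per-split, per-language entry counts (assumed split scheme)."""
    n_val = total * val_pct // 100
    n_train = total - n_val

    def by_lang(n, ratio):
        ko = n * ratio[0] // sum(ratio)
        return {"ko": ko, "en": n - ko}

    return {"train": by_lang(n_train, train_ratio), "val": by_lang(n_val, val_ratio)}


print(split_counts(3_000_000))
```

Under these assumptions, the training split gets 1,932,000 Korean and 828,000 English entries. The validation split gets 120,000 entries of each language. Korean therefore makes up about 68% of the full pool.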

Usage

Install

First, install the necessary libraries. For GPU support, ensure you have a compatible CUDA environment.

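```shell
# Common libraries
pip install -U transformers onnx

# Optimum with ONNX Runtime: install only ONE of the following
# (onnxruntime and onnxruntime-gpu conflict if both are installed)

# For GPU (CUDA)
pip install "optimum[onnxruntime-gpu]"

# For CPU only
pip install "optimum[onnxruntime]"
```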

Note: Ensure that the installed version of onnxruntime-gpu is compatible with your system's CUDA version. If you encounter errors, you may need to manually install a specific version of onnxruntime-gpu that matches your CUDA environment.
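Before loading the model, it can help to check which execution providers your ONNX Runtime build exposes. The sketch below calls `onnxruntime.get_available_providers()`. `pick_provider` is a hypothetical helper that chooses the value to pass as `provider=`. A listed `CUDAExecutionProvider` only means the build supports CUDA. If the CUDA or cuDNN libraries fail to load, ONNX Runtime may still fall back to CPU when the session is created.

```python
# Hypothetical helper: prefer CUDA when this ONNX Runtime build reports it.
def pick_provider(available):
    """Return the provider name to pass to from_pretrained(provider=...)."""
    if "CUDAExecutionProvider" in available:
        return "CUDAExecutionProvider"
    return "CPUExecutionProvider"


try:
    import onnxruntime as ort

    available = ort.get_available_providers()
except ImportError:
    available = []
    print("onnxruntime is not installed; install one of the optimum extras above.")

print("Available providers:", available)
print("Suggested provider:", pick_provider(available))
```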

Running the model on a GPU

Load the model with `provider="CUDAExecutionProvider"` and select the GPU index through the pipeline's `device` argument (for example, `"cuda:0"`).

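```python
from optimum.pipelines import pipeline
from transformers import AutoTokenizer
from optimum.onnxruntime import ORTModelForFeatureExtraction

model_path = "/onnx/.."

sentences = [
    # "Gyeongsangnam-do will provide 8.3 billion won in emergency recovery funds to four
    #  wildfire-hit cities and counties and explain housing recovery plans at resident briefings."
    "경상남도는 산불 피해를 입은 4개 시·군에 대해 긴급 복구비 83억원을 지원하고, 주민 설명회를 통해 주택 복구 일정과 절차를 안내할 계획이라고 밝혔다.",
    # "Hancom InSpace (CEO Choi Myung-jin) passed the technology evaluation, raised 12.5 billion
    #  won in a pre-IPO round, and plans to pursue a KOSDAQ listing within the year."
    "한컴인스페이스(대표 최명진)는 기술성 평가를 통과하고 프리 IPO로 125억 원을 유치했으며, 이를 기반으로 연내 코스닥 상장 절차를 본격화할 계획이라고 밝혔다.",
]

# 1. Load model with CUDAExecutionProvider
ort_model = ORTModelForFeatureExtraction.from_pretrained(
    model_path,
    provider="CUDAExecutionProvider",
    local_files_only=True,
)
tokenizer = AutoTokenizer.from_pretrained(model_path)

# 2. Create pipeline on a specific GPU
pipe = pipeline(
    task="feature-extraction",
    model=ort_model,
    tokenizer=tokenizer,
    device="cuda:0",  # Target the first GPU
)

# 3. Get embeddings: one nested list of token vectors ([1][seq_len][hidden]) per sentence
embeddings = pipe(sentences)
print("Embeddings generated on GPU.")
```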
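The `feature-extraction` pipeline returns per-token hidden states, not one vector per sentence. The card does not say which pooling HiEmbed_base expects. CLS pooling with L2 normalization, as used by the BGE-M3 models it is compared against, is a plausible choice, but that is an assumption; mean pooling is shown as an alternative. `pool` and `top_k` are hypothetical helpers. The sketch also assumes the pipeline processed one sentence at a time (the default), so there is no padding to mask out.

```python
import numpy as np


def pool(token_embeddings, strategy="cls"):
    """Turn one pipeline output ([1][seq_len][hidden]) into an L2-normalized vector."""
    tokens = np.asarray(token_embeddings, dtype=np.float32)
    if tokens.ndim == 3:
        tokens = tokens[0]  # drop the leading batch dimension of size 1
    if strategy == "cls":
        vec = tokens[0]  # first special token
    elif strategy == "mean":
        vec = tokens.mean(axis=0)  # safe only when there is no padding
    else:
        raise ValueError(f"unknown pooling strategy: {strategy}")
    return vec / np.linalg.norm(vec)


def top_k(query_vec, doc_vecs, k=3):
    """Rank documents by cosine similarity (dot product of normalized vectors)."""
    scores = doc_vecs @ query_vec
    order = np.argsort(-scores)[:k]
    return [(int(i), float(scores[i])) for i in order]
```

For example, with the `embeddings` from the example above, `doc_vecs = np.stack([pool(e) for e in embeddings])` gives a matrix that `top_k` can search with a pooled query vector.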

Running the model on multiple GPUs

The optimum pipeline for ONNX Runtime does not automatically parallelize a single request across multiple GPUs. The common approach for multi-GPU usage is to run separate inference processes on different GPUs to handle batches in parallel.

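You can do this by creating one pipeline per GPU, each backed by its own model instance. The pipeline's `device` argument can move the model it wraps, so a single model shared by two pipelines may end up serving both from the same GPU.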

```python
from optimum.pipelines import pipeline
from transformers import AutoTokenizer
from optimum.onnxruntime import ORTModelForFeatureExtraction

model_path = "/onnx/.."
tokenizer = AutoTokenizer.from_pretrained(model_path)


def load_pipeline(device_id: int):
    # Load a separate model instance per GPU so the pipelines do not share one session
    ort_model = ORTModelForFeatureExtraction.from_pretrained(
        model_path,
        provider="CUDAExecutionProvider",
        provider_options={"device_id": device_id},  # select the GPU
        local_files_only=True,
    )
    return pipeline(
        task="feature-extraction",
        model=ort_model,
        tokenizer=tokenizer,
        device=f"cuda:{device_id}",
    )


# Create a pipeline for each GPU
pipe_gpu0 = load_pipeline(0)
pipe_gpu1 = load_pipeline(1)

# Process different data on each pipeline. These calls run one after another;
# to run them concurrently, dispatch them from separate threads or processes.
sentences_batch1 = ["경상남도는 산불 피해를 입은 4개 시·군에 대해 긴급 복구비 83억원을 지원하고, 주민 설명회를 통해 주택 복구 일정과 절차를 안내할 계획이라고 밝혔다."]
sentences_batch2 = ["한컴인스페이스(대표 최명진)는 기술성 평가를 통과하고 프리 IPO로 125억 원을 유치했으며, 이를 기반으로 연내 코스닥 상장 절차를 본격화할 계획이라고 밝혔다."]

embeddings1 = pipe_gpu0(sentences_batch1)
embeddings2 = pipe_gpu1(sentences_batch2)

print("Batch 1 processed on cuda:0.")
print("Batch 2 processed on cuda:1.")
```
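To overlap work across GPUs, the per-GPU pipelines have to be driven concurrently. The sketch below splits a list of sentences into contiguous shards, one per pipeline. It runs the shards in a thread pool and returns the outputs in input order. `shard` and `run_on_devices` are hypothetical helpers. Threads keep the sketch short; separate processes, as suggested above, give stronger isolation if you see contention.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Sequence


def shard(items: Sequence[str], n: int) -> List[List[str]]:
    """Split items into n contiguous, nearly equal shards (order preserved)."""
    size, rem = divmod(len(items), n)
    shards, start = [], 0
    for i in range(n):
        end = start + size + (1 if i < rem else 0)
        shards.append(list(items[start:end]))
        start = end
    return shards


def run_on_devices(pipes: Sequence[Callable], sentences: Sequence[str]) -> list:
    """Run one shard per pipeline concurrently; results follow input order."""
    shards = shard(sentences, len(pipes))
    with ThreadPoolExecutor(max_workers=len(pipes)) as pool:
        # Empty shards (more GPUs than sentences) are skipped; they are always last.
        futures = [pool.submit(p, s) for p, s in zip(pipes, shards) if s]
        results = []
        for fut in futures:
            results.extend(fut.result())
    return results
```

With the two pipelines from the example above, `run_on_devices([pipe_gpu0, pipe_gpu1], sentences)` would return one output per input sentence.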

Benchmark

Korean Leaderboard

| Model | Korean (avg.) | KLUE-STS | KLUE-TC | Ko-StrategyQA | KorSTS | AutoRAGRetrieval | XPQARetrieval |
|---|---|---|---|---|---|---|---|
| bge-m3 | 69.2 | 87.71 | 55.5 | 79.4 | 80.26 | 83.01 | 29.33 |
| BGE-m3-ko | 70.72 | 88.65 | 55.35 | 79.59 | 81.59 | 87.38 | 31.8 |
| HancomInSpace_HiEmbed_base | 71.53 | 87.98 | 61.79 | 79.92 | 81.75 | 85.62 | 32.13 |
| ibm-granite_granite-embedding-107m-multilingual | 57.95 | 73.25 | 48.23 | 70.53 | 70.6 | 68.24 | 16.83 |
| intfloat_multilingual-e5-base | 64.41 | 77.71 | 59.74 | 75.45 | 75.2 | 77.66 | 20.7 |
| intfloat_multilingual-e5-large | 68.14 | 81.58 | 62.09 | 79.82 | 79.24 | 80.66 | 25.45 |
| intfloat_multilingual-e5-large-instruct | 67.11 | 86.98 | 63.61 | 75.68 | 79.64 | 68.08 | 28.64 |
| KURE-v1 | 71.44 | 87.74 | 61.32 | 79.99 | 81.25 | 87.08 | 31.25 |
| sentence-transformers_all-MiniLM-L6-v2 | 15.67 | 22.36 | 19.84 | 1.41 | 42.3 | 6.22 | 1.9 |
| Snowflake_snowflake-arctic-embed-l-v2.0 | 68.69 | 82.55 | 58.98 | 80.46 | 73.81 | 83.86 | 32.47 |
| Snowflake_snowflake-arctic-embed-m | 20.96 | 38.52 | 19.57 | 4.57 | 39.1 | 19.19 | 4.8 |
| Snowflake_snowflake-arctic-embed-s | 17.64 | 26.17 | 20.5 | 4.89 | 33.36 | 16.91 | 4 |
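The card does not state this, but the Korean column appears to be the unweighted mean of the six Korean task scores. For every row, recomputing the mean lands within 0.01 of the reported value; small differences presumably come from rounding of the per-task scores. The check below uses a few rows copied from the table.

```python
from statistics import mean

# Per-task scores (KLUE-STS, KLUE-TC, Ko-StrategyQA, KorSTS, AutoRAGRetrieval,
# XPQARetrieval) and the reported Korean score, copied from the table above
rows = {
    "HancomInSpace_HiEmbed_base": ([87.98, 61.79, 79.92, 81.75, 85.62, 32.13], 71.53),
    "KURE-v1": ([87.74, 61.32, 79.99, 81.25, 87.08, 31.25], 71.44),
    "BGE-m3-ko": ([88.65, 55.35, 79.59, 81.59, 87.38, 31.8], 70.72),
    "bge-m3": ([87.71, 55.5, 79.4, 80.26, 83.01, 29.33], 69.2),
}

for name, (scores, reported) in rows.items():
    print(f"{name}: recomputed mean={mean(scores):.4f}, reported={reported}")
```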

English Leaderboard

| Model | English | Retrieval | STS | Classification | Clustering | PairClassification | Reranking |
|---|---|---|---|---|---|---|---|
| bge-m3 | 28.5 | 54.42 | 80.44 | 63.68 | 42.04 | 84.48 | 55.27 |
| BGE-m3-ko | 28.66 | 55.83 | 81.18 | 63.64 | 43.49 | 83.95 | 55.2 |
| HancomInSpace_HiEmbed_base | 29.07 | 56.22 | 81.37 | 64.45 | 44.83 | 84.8 | 56.21 |
| ibm-granite_granite-embedding-107m-multilingual | 26.3 | 44.77 | 72.55 | 54.26 | 41.82 | 80.29 | 55.59 |
| intfloat_multilingual-e5-base | 27.75 | 48.8 | 75.98 | 61.34 | 44.22 | 83.74 | 54.16 |
| intfloat_multilingual-e5-large | 28.28 | 52.29 | 78.97 | 61.66 | 45.52 | 84.32 | 54.67 |
| intfloat_multilingual-e5-large-instruct | 29.05 | 50.29 | 81 | 62.67 | 49.9 | 82.12 | 55.32 |
| KURE-v1 | 28.77 | 55.8 | 80.74 | 64.16 | 44.12 | 84.56 | 55.71 |
| sentence-transformers_all-MiniLM-L6-v2 | 27.11 | 17.54 | 51.56 | 51.86 | 46.22 | 82.37 | 58.04 |
| Snowflake_snowflake-arctic-embed-l-v2.0 | 28.75 | 57.6 | 75.68 | 60.06 | 47.58 | 83 | 57 |
| Snowflake_snowflake-arctic-embed-m | 26.58 | 22.95 | 51.23 | 49.39 | 47.65 | 75.83 | 57.88 |
| Snowflake_snowflake-arctic-embed-s | 26.87 | 21.5 | 48.07 | 52.19 | 46.79 | 80.11 | 55.93 |

Overall Leaderboard

| Model | Overall | Korean (avg.) | English | Retrieval | STS | Classification | Clustering | PairClassification | Reranking |
|---|---|---|---|---|---|---|---|---|---|
| bge-m3 | 63.39 | 69.2 | 28.5 | 54.42 | 80.44 | 63.68 | 42.04 | 84.48 | 55.27 |
| BGE-m3-ko | 63.88 | 70.72 | 28.66 | 55.83 | 81.18 | 63.64 | 43.49 | 83.95 | 55.2 |
| HancomInSpace_HiEmbed_base | 64.65 | 71.53 | 29.07 | 56.22 | 81.37 | 64.45 | 44.83 | 84.8 | 56.21 |
| ibm-granite_granite-embedding-107m-multilingual | 58.21 | 57.95 | 26.3 | 44.77 | 72.55 | 54.26 | 41.82 | 80.29 | 55.59 |
| intfloat_multilingual-e5-base | 61.37 | 64.41 | 27.75 | 48.8 | 75.98 | 61.34 | 44.22 | 83.74 | 54.16 |
| intfloat_multilingual-e5-large | 62.91 | 68.14 | 28.28 | 52.29 | 78.97 | 61.66 | 45.52 | 84.32 | 54.67 |
| intfloat_multilingual-e5-large-instruct | 63.55 | 67.11 | 29.05 | 50.29 | 81 | 62.67 | 49.9 | 82.12 | 55.32 |
| KURE-v1 | 64.18 | 71.44 | 28.77 | 55.8 | 80.74 | 64.16 | 44.12 | 84.56 | 55.71 |
| sentence-transformers_all-MiniLM-L6-v2 | 51.27 | 15.67 | 27.11 | 17.54 | 51.56 | 51.86 | 46.22 | 82.37 | 58.04 |
| Snowflake_snowflake-arctic-embed-l-v2.0 | 63.49 | 68.69 | 28.75 | 57.6 | 75.68 | 60.06 | 47.58 | 83 | 57 |
| Snowflake_snowflake-arctic-embed-m | 50.82 | 20.96 | 26.58 | 22.95 | 51.23 | 49.39 | 47.65 | 75.83 | 57.88 |
| Snowflake_snowflake-arctic-embed-s | 50.76 | 17.64 | 26.87 | 21.5 | 48.07 | 52.19 | 46.79 | 80.11 | 55.93 |

About us

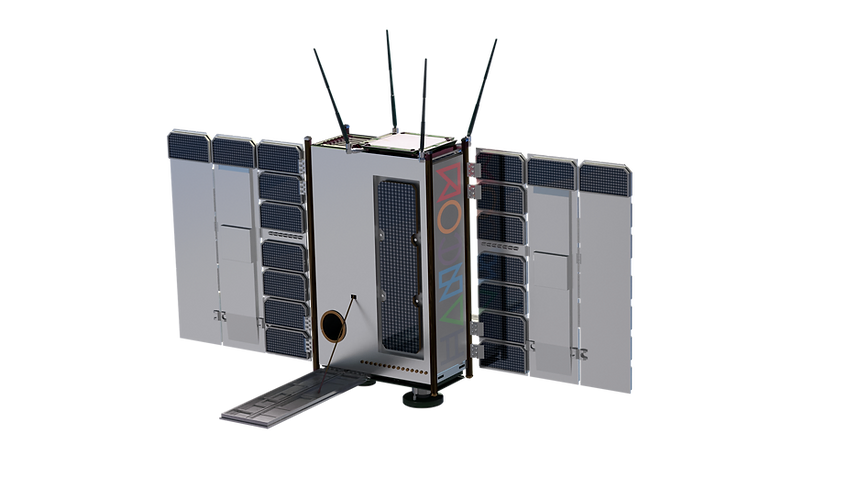

Citation

```bibtex
@misc{hiembed_base_v1,
  title={HiEmbed\_base\_v1: Hancom InSpace Embedding Model (Base Type)},
  author={Jo, Chanho and Kim, Hajeong},
  year={2025}
}
```
